I've been pottering around a bit more with WebGL for a personal project/experiment, and came across a hurdle that I wasn't expecting, involving arrays. Maybe my Google-fu was lacking, but this doesn't seem to be widely documented online - and I get the distinct impression that WebGL implementations might have changed over time, breaking some older example code - so here's my attempt to help anyone else who comes across the same issues. As I'm far from being an expert in WebGL or OpenGL, it's highly likely that some of the information below could be wrong or sub-optimal - contact me via Twitter if you spot anything that should be corrected.

I want to write my own implementation of Voronoi diagrams as a shader, with JavaScript doing little more than setting up some initial data and feeding it into the shader. Specifically, JavaScript would generate an array of random (x,y) coordinates, which the shader would then process and turn into a Voronoi diagram. I was aware that WebGL supported array types, but I hadn't used them at all in my limited experimentation, so rather than jumping straight in, I thought it would be a good idea to write a simple test script to verify I understood how arrays work.

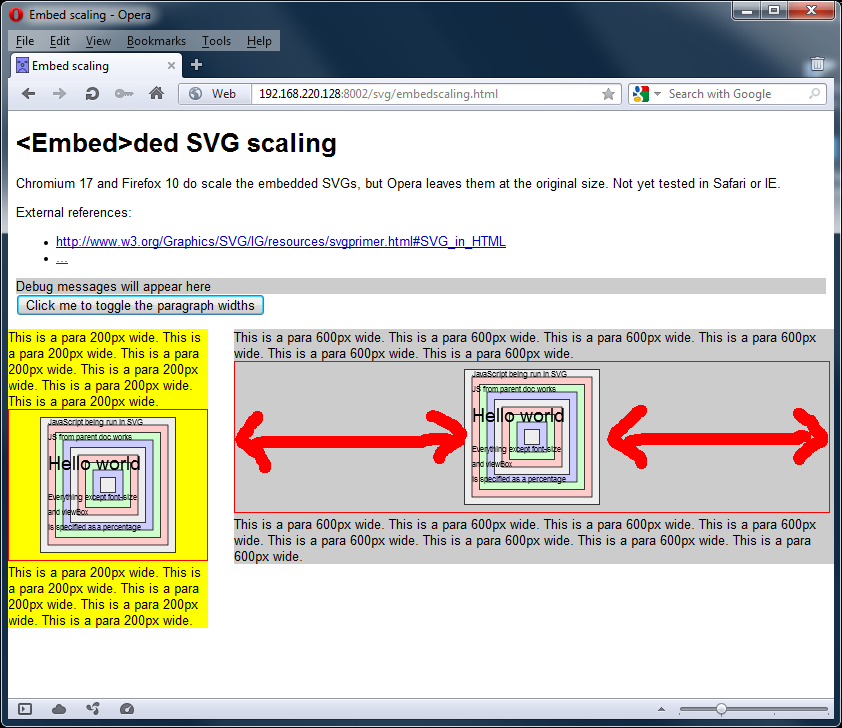

This turned out to be a wise decision: what would be basic array access in pretty much any other language is not possible in WebGL. (Or OpenGL ES - I'm not sure where exactly the "blame" lies.) Take the following fragment shader code:

```glsl
uniform float uMyArray[16];

...

void main(void) {
    int myIndex = int(mod(floor(gl_FragCoord.y), 16.0));
    float myVal = uMyArray[myIndex];
    ...
```

The intention is to obtain an arbitrary value from the array - simple enough, right?

However, in both Firefox 12 and Chromium 19, this code fails at the shader-compilation stage, with the error message

`'[]': index expression must be constant`

Although I found it hard to believe initially, this means more or less what it says: you can't access an element of an array using a regular integer variable as the index.

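My uninformed guess is that this is some sort of security/leak prevention mechanism, to stop you reading memory you shouldn't be able to, e.g. by making the index variable negative or bigger than 15 in this case. I haven't seen any tangible confirmation of this, though. For what it's worth, Appendix A of the OpenGL ES Shading Language 1.00 specification, whose minimum requirements WebGL enforces, only mandates indexing of uniform arrays in fragment shaders with constant-index-expressions (vertex shaders must support all forms of indexing), which suggests the restriction has more to do with limited GPU hardware than with security.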

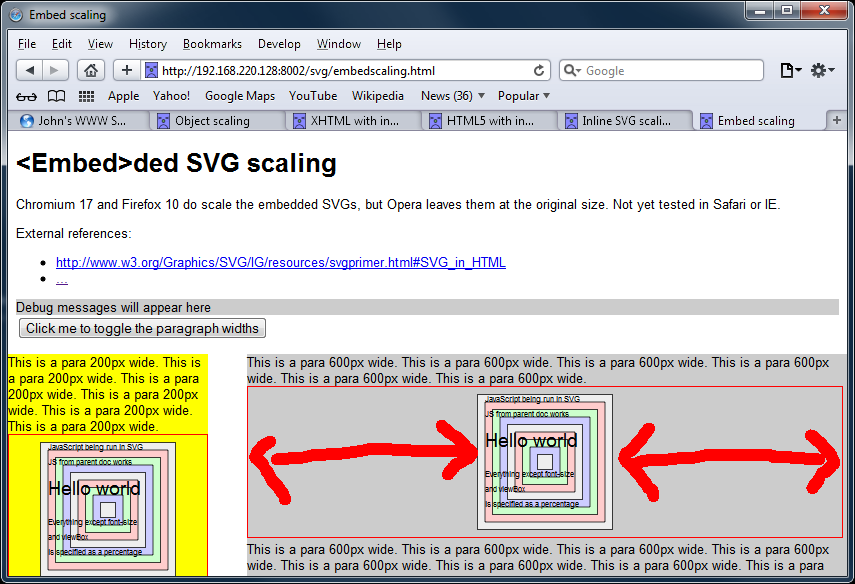

Help is at hand, though, as it turns out that "constant" doesn't mean quite what you might expect from other languages. In particular, a for-loop counter is considered constant, I guess because it is constant within the scope of the loop body. (I haven't actually tried to alter the value of the counter within a loop, but the GLSL ES 1.00 specification forbids assigning to the loop index inside the body, which fits this explanation.)

This led me to my first way of working around this limitation:

```glsl
int myIndex = int(mod(floor(gl_FragCoord.y), 16.0));
float myVal;

// The loop counter counts as a constant index, so uMyArray[i] compiles
for (int i = 0; i < 16; i++) {
    if (i == myIndex) {
        myVal = uMyArray[i];
        break;
    }
}
```

This seems pretty inefficient, conceptually at least. There's a crude working example here, if you want to see more. Note that you can't optimize the for loop either (for instance, by looping only as far as myIndex): the loop condition is subject to similar constant restrictions.
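Because both the uniform array's size and the loop bound have to be compile-time constants, one way to keep them in step with the JavaScript that fills the array is to generate the shader source from JavaScript, with the size baked in. The following is a minimal sketch of that idea; `buildLookupShader()` and its `ARRAY_SIZE` macro are hypothetical names, and the shader body is just the loop workaround moved into a function.

```javascript
// Hypothetical helper: builds fragment shader source for the loop-based
// lookup, baking the array size in as a literal so that both the uniform
// declaration and the loop bound are compile-time constants.
function buildLookupShader(arraySize) {
  if (!Number.isInteger(arraySize) || arraySize < 1) {
    throw new RangeError("arraySize must be a positive integer");
  }
  return [
    "precision mediump float;",
    "#define ARRAY_SIZE " + arraySize,
    "uniform float uMyArray[ARRAY_SIZE];",
    "",
    "float lookup(int index) {",
    "  float result = 0.0;",
    "  // The loop index is a constant-index-expression, so it may index the array",
    "  for (int i = 0; i < ARRAY_SIZE; i++) {",
    "    if (i == index) {",
    "      result = uMyArray[i];",
    "      break;",
    "    }",
    "  }",
    "  return result;",
    "}",
    "",
    "void main(void) {",
    "  int myIndex = int(mod(floor(gl_FragCoord.y), float(ARRAY_SIZE)));",
    "  float myVal = lookup(myIndex);",
    "  gl_FragColor = vec4(myVal, myVal, myVal, 1.0);",
    "}",
  ].join("\n");
}
```

The returned string can be handed to `gl.shaderSource()` in the usual way. Whether the compiler turns the loop into anything cheaper is up to the driver.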

Whilst Googling for ways to fix this, I also found reference to another limitation, regarding the size of array that a WebGL implementation is guaranteed to support. I don't have a link to hand, but IIRC it was 128 elements. (OpenGL ES 2.0 guarantees at least 128 uniform vectors in vertex shaders, but only 16 in fragment shaders, and these are counted in vec4 slots rather than individual values.) That isn't a problem for this simple test, but could well be a problem for my Voronoi diagram. The page in question suggested that using a texture would be a way to get around this.
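Rather than trusting a remembered figure, the actual limits can be queried at runtime. A minimal sketch, assuming `gl` is an existing WebGLRenderingContext; `describeLimits()` is a hypothetical helper name, and the uniform limits it reports are counted in vec4 slots, not individual floats.

```javascript
// Hypothetical helper: report the implementation's real limits.
// `gl` is assumed to be a WebGLRenderingContext (or anything exposing the
// same getParameter() method and constants).
function describeLimits(gl) {
  return {
    // Uniform limits are in vec4 slots, not individual floats
    fragmentUniformVectors: gl.getParameter(gl.MAX_FRAGMENT_UNIFORM_VECTORS),
    vertexUniformVectors: gl.getParameter(gl.MAX_VERTEX_UNIFORM_VECTORS),
    // Largest texture width/height, relevant to the texture-based workaround
    maxTextureSize: gl.getParameter(gl.MAX_TEXTURE_SIZE),
  };
}
```

In a browser you'd call `describeLimits(canvas.getContext("webgl"))` and size the array (or decide to use a texture) accordingly.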

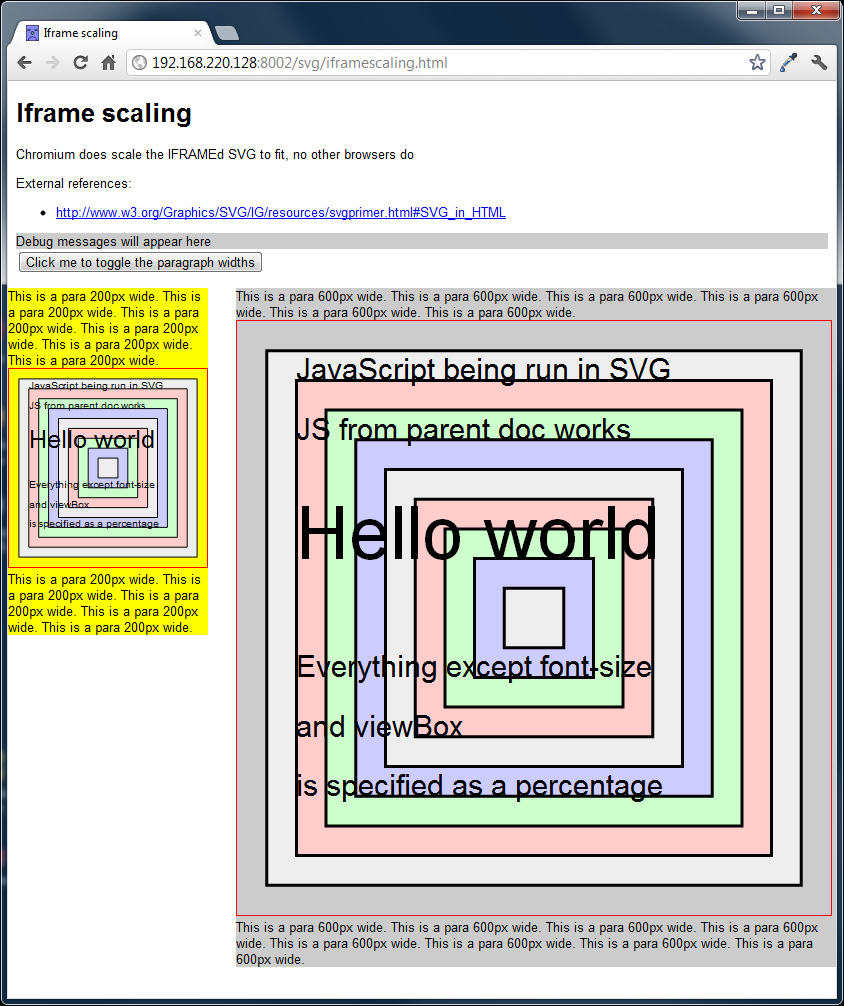

As such, I've implemented another variant on this code, using a texture instead of an array. This involves quite a bit more effort, especially on the JavaScript side, but seems like it should be more efficient on the shader side. (NB: I haven't done any profiling, so I could be talking rubbish.)

Some example code can be found here, but the basic principle is to

- Create a 2D canvas in JavaScript that is N pixels wide and 1 pixel high.

- Create an ImageData object, and write your data into the bytes of that object. (This is relatively easy or hard depending on whether you have integer or float values, and how wide those values are.)

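- Convert the canvas's ImageData into a WebGL texture, and pass it to the shader as a sampler2D uniform. Note you (probably) won't need any texture coordinates or the usual paraphernalia associated with working with textures.

- In your shader, extract the value from the texture by using the texture2D function, with vec2((myIndex + 0.5) / arraySize, 0.0) as the second argument. The + 0.5 samples the centre of the texel rather than its edge, where filtering could pick up (or blend in) the neighbouring value.

- As the values in the returned vec4 are floats in the range [0.0, 1.0] (each byte divided by 255), you'll probably want to decode them back into whatever the original number format was.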
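The coordinate arithmetic in these steps can be checked without a GPU. This sketch, with hypothetical helper names, computes the centre of texel `index` in an N-by-1 texture and mimics which texel NEAREST filtering with CLAMP_TO_EDGE would select for a given u, assuming GL's rule of taking floor(u * width).

```javascript
// Hypothetical helpers for an N x 1 texture, assuming NEAREST filtering
// and CLAMP_TO_EDGE wrapping.

// u coordinate of the centre of texel `index` in a texture `width` texels wide
function texelCentreU(index, width) {
  return (index + 0.5) / width;
}

// Texel that NEAREST filtering picks for coordinate u, clamped to the edges
function nearestTexel(u, width) {
  return Math.min(width - 1, Math.max(0, Math.floor(u * width)));
}
```

Sampling at index / width instead lands exactly on the boundary between two texels, so any rounding error in the shader could tip the lookup into the neighbour; the centre leaves half a texel of margin either side.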

To go into more detail, here are the relevant bits of code from the previously linked example.

Creating our array of data

Firstly, we generate the data into a typed array:

```javascript
function createRandomValues(numVals) {
    // For now, keep to 4-byte values to match RGBA values in textures
    var buf = new ArrayBuffer(numVals * 4);
    var buf32 = new Uint32Array(buf);
    for (var i=0; i < numVals; i++) {
        buf32[i] = i * 16;
    }
    return buf32;
}

...

var randVals = createRandomValues(16);
```

This is nothing special, and is really only included for completeness, and for reference for anyone unfamiliar with typed arrays. Observant readers will note that the function name createRandomValues is something of a misnomer ;-)
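For the Voronoi use case the data really should be random. Here is a minimal sketch of a version that lives up to its name, assuming one byte per coordinate and points stored as consecutive x, y bytes (so two points fit in each RGBA texel); `createRandomPoints()` is a hypothetical name.

```javascript
// Hypothetical helper: `count` random (x, y) points, one byte per coordinate,
// laid out as x0, y0, x1, y1, ... so that two points fill one RGBA texel.
function createRandomPoints(count) {
  const bytes = new Uint8Array(count * 2);
  for (let i = 0; i < bytes.length; i++) {
    // Math.random() is in [0, 1), so this yields an integer 0..255
    bytes[i] = Math.floor(Math.random() * 256);
  }
  return bytes;
}
```

Coordinates would then be decoded in the shader as byte / 255 and scaled to the canvas size.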

Creating the canvas

The array of data is then fed into a function which creates a canvas/ImageData object big enough to store it:

```javascript
function calculatePow2Needed(numBytes) {
    /** Return the width of an n*1 RGBA canvas needed to
     * store numBytes. Returned value is a power of two.
     */
    // Four bytes per RGBA pixel; round up for any partial pixel
    var numPixels = Math.max(1, Math.ceil(numBytes / 4));
    // Double until we reach numPixels; this avoids the floating-point
    // error that Math.log() can introduce
    var width = 1;
    while (width < numPixels) {
        width *= 2;
    }
    return width;
}
```

```javascript
function createTexture(typedData) {
    /** Create a canvas/context containing a representation of the
     * data in the supplied TypedArray. The canvas will be 1 pixel
     * high; it will be a sufficiently large power-of-two wide (although
     * I think this isn't actually needed).
     */
    var numBytes = typedData.length * typedData.BYTES_PER_ELEMENT;
    var canvasWidth = calculatePow2Needed(numBytes);

    var cv = document.createElement("canvas");
    cv.width = canvasWidth;
    cv.height = 1;
    var c = cv.getContext("2d");
    var img = c.createImageData(cv.width, cv.height);
    var imgd = img.data;

    ...

...

createTexture(randVals);
```

The above code creates a canvas whose width is a power of two, as WebGL has restrictions on textures that aren't sized that way. As it happens, those restrictions (in WebGL 1, non-power-of-two textures can't use mipmapping or REPEAT wrapping) don't affect the way we use the texture here, so this is almost certainly unnecessary.
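If you'd rather only pay for the padding when it's needed, a power-of-two check is a one-liner. A minimal sketch; `isPowerOfTwo()` is a hypothetical helper, and the bitwise trick assumes the value fits in 32 bits, which any texture dimension will.

```javascript
// Hypothetical helper: true if n is a positive integer power of two.
// n & (n - 1) clears the lowest set bit, leaving 0 only when one bit was set.
function isPowerOfTwo(n) {
  return Number.isInteger(n) && n > 0 && (n & (n - 1)) === 0;
}
```

For example, `isPowerOfTwo(16)` is true and `isPowerOfTwo(12)` is false.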

Storing the array data in the canvas

The middle section of createTexture() is fairly straightforward, although a more real-world use would involve a bit more effort.

```javascript
    var offset = 0;
    // Nasty hack - this currently only supports uint8 values
    // in a Uint32Array. Should be easy to extend to larger unsigned
    // ints, floats a bit more painful. (Bear in mind that you'll
    // need to write a decoder in your shader).
    for (offset=0; offset<typedData.length; offset++) {
        imgd[offset*4] = typedData[offset];
        imgd[(offset*4)+1] = 0;
        imgd[(offset*4)+2] = 0;
        imgd[(offset*4)+3] = 0;
    }
    // Fill the rest with zeroes (not strictly necessary, as createImageData()
    // already starts out transparent black, and we could probably get away
    // with a non-power-of-two width for this type of shader use).
    // imgd holds 4 bytes per pixel, so run to imgd.length, not canvasWidth.
    for (offset=typedData.length*4; offset < imgd.length; offset++) {
        imgd[offset] = 0;
    }
```

I made my life easy by just storing 8-bit integer values in 32-bit integers, as this maps nicely to the 4 bytes used in an (R,G,B,A) canvas pixel. Storing bigger integer or float values, or packing several 8-bit values into each pixel, could be done with a few more lines. One potential gotcha is that typed arrays use the host machine's native byte order (endianness), so simply dumping the bytes of a Uint32Array directly into the ImageData object could have "interesting" effects on big-endian hardware.
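One way to sidestep the byte-order gotcha is to write the bytes through a DataView, which takes an explicit endianness flag instead of using the host's order. A minimal sketch, assuming you want little-endian order (least significant byte in R, which is where the loop above puts the 8-bit values); the function names are hypothetical.

```javascript
// Hypothetical helper: copy 32-bit unsigned values into RGBA bytes with an
// explicit little-endian layout, regardless of the host's byte order.
function packUint32ToRGBA(values) {
  const bytes = new Uint8Array(values.length * 4);
  const view = new DataView(bytes.buffer);
  for (let i = 0; i < values.length; i++) {
    view.setUint32(i * 4, values[i], true); // true = little-endian
  }
  return bytes;
}

// Hypothetical helper: reports the host byte order, for comparison
function hostIsLittleEndian() {
  return new Uint8Array(new Uint32Array([1]).buffer)[0] === 1;
}
```

The result can be copied into the ImageData with `imgd.set(bytes)`, provided `imgd` is at least as long.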

Convert the canvas into a texture

createTexture() concludes by converting the canvas/ImageData into a texture:

```javascript
    // Convert to WebGL texture
    myTexture = gl.createTexture();
    gl.bindTexture(gl.TEXTURE_2D, myTexture);
    gl.texImage2D(gl.TEXTURE_2D,
                  0,
                  gl.RGBA,
                  gl.RGBA,
                  gl.UNSIGNED_BYTE,
                  img);
    /* These params let the data through (seemingly) unmolested - via
     * http://www.khronos.org/webgl/wiki/WebGL_and_OpenGL_Differences#Non-Power_of_Two_Texture_Support
     */
    gl.texParameteri(gl.TEXTURE_2D, gl.TEXTURE_MIN_FILTER, gl.LINEAR);
    gl.texParameteri(gl.TEXTURE_2D, gl.TEXTURE_WRAP_S, gl.CLAMP_TO_EDGE);
    gl.texParameteri(gl.TEXTURE_2D, gl.TEXTURE_WRAP_T, gl.CLAMP_TO_EDGE);
    gl.bindTexture(gl.TEXTURE_2D, null); // 'clear' texture status
}
```

The main lines to note are the gl.texParameteri() calls, which tell WebGL not to do any of the usual texture processing such as mipmapping (the default minification filter expects mipmaps) or repeat-wrapping. As we want to use the original fake (R,G,B,A) values unmolested, the last thing we want is for OpenGL to try to be helpful and feed our shader code some modified version of these values.

EDIT 2012/07/10: I found the example parameter code above wasn't enough for another program I was working on. Adding an additional setting for the magnification filter, gl.TEXTURE_MAG_FILTER, fixed it:

```javascript
gl.texParameteri(gl.TEXTURE_2D, gl.TEXTURE_MAG_FILTER, gl.NEAREST);
```

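I suspect gl.NEAREST is generally better than gl.LINEAR for this sort of thing, for gl.TEXTURE_MIN_FILTER as well: LINEAR filtering blends neighbouring texels whenever the sample point isn't exactly at a texel centre, which would corrupt the stored values. I haven't done thorough tests to properly evaluate this, though.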
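The reason LINEAR causes trouble can be seen by mimicking the filter's arithmetic. For LINEAR filtering, GL takes u * width - 0.5 and blends the two texels either side of that point by its fractional part. This sketch (hypothetical function name, 1D only, CLAMP_TO_EDGE assumed) shows that sampling at index / width blends two neighbouring texels 50/50, while sampling at the texel centre does not.

```javascript
// Hypothetical helper mimicking 1D LINEAR filtering on an N x 1 texture
// with CLAMP_TO_EDGE: which two texels are blended, and the weight given
// to the second one (the first gets 1 - secondWeight).
function linearTexels(u, width) {
  const x = u * width - 0.5;   // position relative to texel centres
  const i0 = Math.floor(x);
  const frac = x - i0;
  const clamp = (i) => Math.min(width - 1, Math.max(0, i));
  return { first: clamp(i0), second: clamp(i0 + 1), secondWeight: frac };
}
```

`linearTexels(5 / 16, 16)` reports texels 4 and 5 with a weight of 0.5 each, whereas `linearTexels(5.5 / 16, 16)` puts all the weight on texel 5.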

Extract the data from the texture

Just for completeness, here's the mundane code to pass the texture from JavaScript to the shader:

```javascript
gl.activeTexture(gl.TEXTURE0);
gl.bindTexture(gl.TEXTURE_2D, myTexture);
gl.uniform1i(gl.getUniformLocation(shaderProgram, "uSampler"), 0);
```

Of more interest is the shader code to reference the "array element" from our pseudo-texture:

```glsl
uniform sampler2D uSampler;

...

void main(void) {
    float myIndex = mod(floor(gl_FragCoord.y), 16.0);
    // code for a 16x1 texture; + 0.5 samples the centre of the texel
    vec4 fourBytes = texture2D(uSampler, vec2((myIndex + 0.5) / 16.0, 0.0));
    ...
```

This now gives us four floats which we can convert into something more usable. As we are (ab)using the RGBA space, remember that these four values are in the range 0.0 to 1.0: each is the original byte divided by 255.
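To make that range concrete, here's a JavaScript mimic of what texture2D hands back for an UNSIGNED_BYTE RGBA texture with NEAREST filtering: the four bytes of the selected texel, each divided by 255. `fetchTexel()` is a hypothetical name, and `bytes` is assumed to be the same RGBA data that was uploaded.

```javascript
// Hypothetical helper mimicking texture2D on an N x 1 UNSIGNED_BYTE RGBA
// texture with NEAREST filtering and CLAMP_TO_EDGE wrapping.
function fetchTexel(bytes, width, u) {
  const texel = Math.min(width - 1, Math.max(0, Math.floor(u * width)));
  const base = texel * 4;
  // Each channel is normalised to [0, 1] by dividing by 255
  return [0, 1, 2, 3].map((c) => bytes[base + c] / 255);
}
```

With the example data (16 values, R = i * 16), fetching index 3 at its texel centre returns an R of 48 / 255, roughly 0.188.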

Decode the data

Now, this is where I cheated somewhat in my test code, as I just used the RGBA value pretty much as-was to paint a pixel:

```glsl
gl_FragColor = vec4(fourBytes.r, fourBytes.g, fourBytes.b, 1.0);
```

To turn it back into an integer, I'd need something along the lines of:

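```glsl
// Multiply by 255.0 to recover the byte; round rather than truncate,
// since the product can come out fractionally below the original byte
int myInt8 = int(floor(fourBytes.r * 255.0 + 0.5));
// Assuming R holds the low byte and G the high byte
int myInt16 = int(floor(fourBytes.r * 255.0 + 0.5))
            + 256 * int(floor(fourBytes.g * 255.0 + 0.5));
```

The above code is untested. Note the multiplier: each channel holds byte/255, so the channel holding the high byte needs multiplying by 256*255 overall (done above by recovering the byte with * 255.0 and then scaling it by 256), not 65536; which channel holds the high byte depends on how you packed the data. In a similar vein, a more intelligent packing system could have got 4 different 8-bit values into a single texel, pulling out the relevant (R,G,B,A) value as appropriate.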
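The same decode can be tried out in JavaScript, where it's easier to test exhaustively. A minimal sketch of the rounding approach, assuming R holds the low byte and G the high byte; the function names are hypothetical.

```javascript
// Hypothetical helpers mirroring a shader-side decode.
// A channel arrives as byte / 255; rounding recovers the exact byte.
function channelToByte(c) {
  return Math.floor(c * 255 + 0.5);
}

// Assumes R = low byte, G = high byte
function decodeUint16(r, g) {
  return channelToByte(r) + 256 * channelToByte(g);
}
```

Running every value from 0 to 65535 through this round trip returns the original value, which isn't guaranteed if you truncate instead of rounding.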

I wouldn't be surprised if some of the details above could be improved, as this is a very long-winded way of doing something that ought to be completely trivial. However, as it stands, it should at least get anyone suffering the same issues as me moving forward.

Now, to get back to the code I originally wanted to write...